Debugging Matplotlib (Part 5)

Posted by Mark on May 16, 2022 at 07:27 | Last modified: March 22, 2022 16:42

Last time, I laid out some objectives to more simply re-create the first graph shown here. I will continue that today.

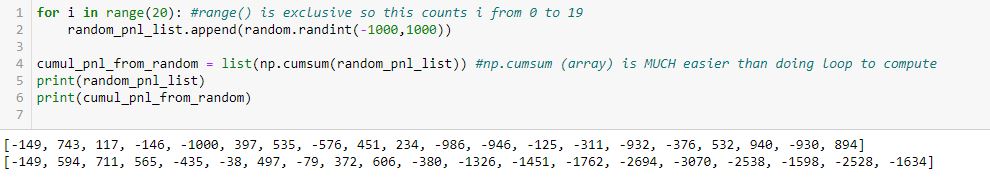

Objectives #2-3 are pretty straightforward:

In L2, both arguments to random.randint() are inclusive. You can see a -1000 in the first list printed at the bottom.

Also note that the second line of output is a list, too. np.cumsum() returns a NumPy array, but the list constructor (in L4) converts it to a list. Using np.cumsum() does this in one line. The alternative is a multi-line loop that iterates over each element of the first list and adds it to the last item of an incrementally growing cumulative-sum list.

Not shown are the two modules I need to import in order to use these functions:

> import random

> import numpy as np
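The code for objectives #2-3 appears only in the screenshot. Here is a minimal sketch of the same two steps, assuming the variable names from the objective list in Part 4 (random_pnl_list, cumul_pnl_from_random). The seed is a hypothetical addition for repeatability, and cumul_loop is a hypothetical name for the loop version described above. I use .tolist() rather than the list() constructor so the elements are plain Python ints instead of NumPy integers. Both give the same values.

```python
import random

import numpy as np

random.seed(7)  # hypothetical seed so the output is repeatable

# Objective #2: 20 simulated trade results; randint() includes both endpoints.
random_pnl_list = [random.randint(-1000, 1000) for _ in range(20)]

# Objective #3: the running total in one line; tolist() turns the array into a list.
cumul_pnl_from_random = np.cumsum(random_pnl_list).tolist()

# The equivalent multi-line loop: add each result to the last cumulative value.
cumul_loop = []
for pnl in random_pnl_list:
    previous = cumul_loop[-1] if cumul_loop else 0
    cumul_loop.append(previous + pnl)

print(random_pnl_list)
print(cumul_pnl_from_random)
```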

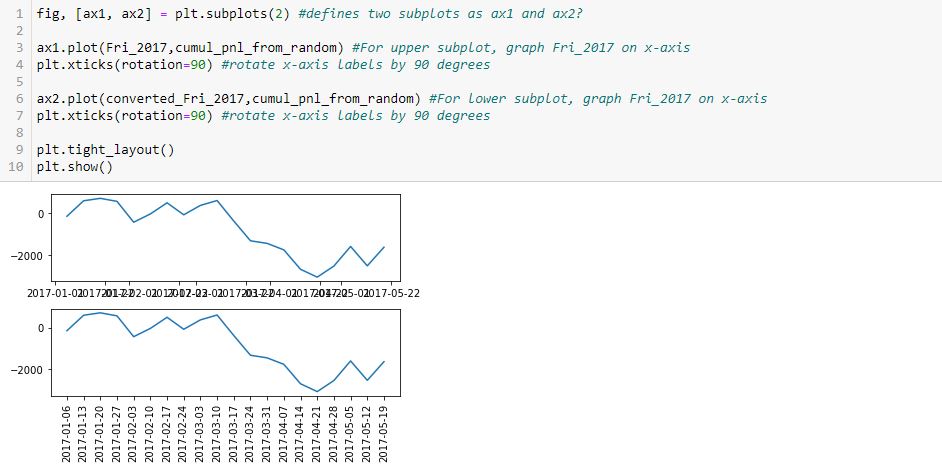

I am going to skip ahead to objective #5 for the time being: the graph. Here is my first [flawed] attempt:

As you can see, the x-axis labels are rotated in the lower subplot but not rotated [and thereby rendered illegible] in the upper. Why do L4 and L7 not accomplish this for both subplots, respectively?

After googling this question a few different ways and looking through at least 20 different posts, the best response I found is this one from Stack Overflow: [no matter where the line appears in the code] “plt manipulates the last axis used.” Here, the last subplot is rotated but the first is not. What confuses me here is where plt.xticks() appears. In order to get the output seen, does the first subplot get rotated by L4 only to be unrotated with generation of the subsequent [last] axis at L6? Does L7 then rotate the x-axis labels on the subsequent [last, or lower] subplot?

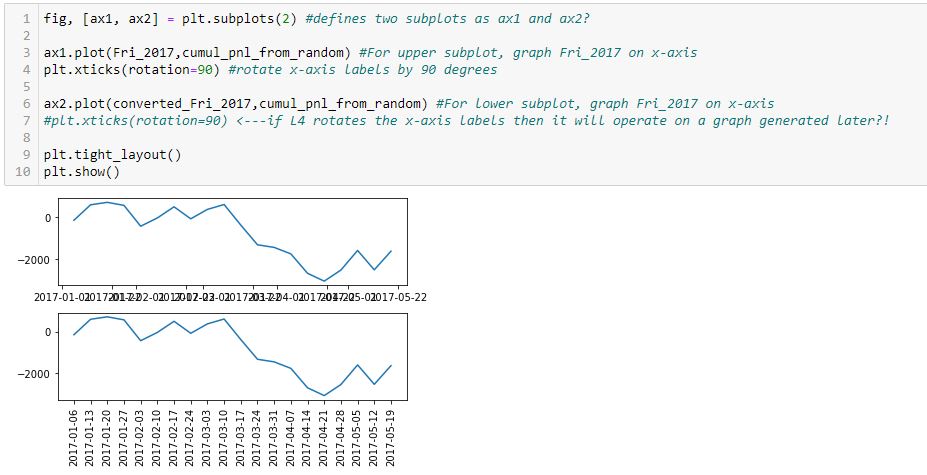

I find it extremely counterintuitive for a later line in the program to undo an earlier one because the earlier graph has already been drawn. I can test whether L7 actually rotates the x-axis labels in the lower subplot by commenting it out:

Indeed, this output is the same as the previous one, which suggests a later line does not undo an earlier one. Rather, the earlier line affects a graph drawn later.

How does that work, exactly?
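The screenshot's code is not reproduced in the text, so this is a sketch under one assumption: both subplots are created up front with plt.subplots(2) and then filled through axs[0] and axs[1]. Under that assumption, pyplot's "current" Axes is already the lower subplot before anything is plotted. In a typical script, nothing is rendered until the figure is drawn (for example by plt.show() or savefig()). The sketch checks which Axes a plt-level call targets.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend so the sketch runs without a display
import matplotlib.pyplot as plt

fig, axs = plt.subplots(2)

# Each subplot becomes "current" as it is added, so the last one created is current.
print(plt.gca() is axs[1])  # True

# Plotting through an Axes object does not change which Axes pyplot treats as current.
axs[0].plot([1, 2, 3], [3, 1, 2])
print(plt.gca() is axs[1])  # True

# This plt-level call therefore rotates the lower subplot's tick labels only.
plt.xticks(rotation=45)
upper = axs[0].get_xticklabels()[0].get_rotation()
lower = axs[1].get_xticklabels()[0].get_rotation()
print(upper, lower)

# Addressing each Axes directly avoids relying on the "current" Axes at all.
for ax in axs:
    ax.tick_params(axis="x", labelrotation=45)
```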

Categories: Python | Comments (0) | Permalink

Debugging Matplotlib (Part 4)

Posted by Mark on May 13, 2022 at 07:24 | Last modified: March 22, 2022 10:27

Last time, I resolved a couple of complications with regard to the x-axis. Today I want to tackle the issue of plotting a marker at select points only, as shown in the first graph here.

Here is a complete account of what I have in that graph:

- Cumulative PnL on the y-axis that is available every week

- Datetime x-axis

- Markers on the line plot where new trades begin

I cobbled together some solutions from the internet in order to make this work. I finally realized it’s not about plotting the line and then figuring out how to erase certain markers or plotting just the markers and figuring out how to connect them with a line. Rather, I must plot the line without markers first, and then plot all points (with marker or null) on the same set of axes:

> axs[0].plot(btstats['Date'], btstats['Cum.PnL'], marker=' ', color='black', linestyle='solid') #plots line only

> for xp, yp, m in zip(btstats['Date'].tolist(), btstats['Cum.PnL'].tolist(), marker_list):

>     axs[0].plot(xp, yp, m, color='orange', markersize=12) #plots markers only (or lack thereof)
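Because btstats and marker_list live in the backtesting code, here is a self-contained sketch of the same two-step approach. It uses a small hypothetical DataFrame and marker list: six weekly rows, with 'D' (diamond) where a trade begins and an empty format string elsewhere. Step 1 draws the bare line. Step 2 plots each point as its own one-point line, which shows either a diamond or nothing visible.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend for a headless run
import matplotlib.pyplot as plt
import pandas as pd

# Hypothetical stand-in for btstats: weekly dates and cumulative PnL.
btstats = pd.DataFrame({
    "Date": pd.date_range("2017-01-06", periods=6, freq="W-FRI"),
    "Cum.PnL": [0, 250, -100, 400, 650, 300],
})
# Hypothetical marker_list: a diamond where a new trade begins, nothing elsewhere.
marker_list = ["D", "", "", "D", "", ""]

fig, axs = plt.subplots(2)

# Step 1: the line alone (the default Line2D has no markers).
axs[0].plot(btstats["Date"], btstats["Cum.PnL"], color="black", linestyle="solid")

# Step 2: one single-point plot per row; an empty format string draws nothing visible.
for xp, yp, m in zip(btstats["Date"].tolist(), btstats["Cum.PnL"].tolist(), marker_list):
    axs[0].plot(xp, yp, m, color="orange", markersize=12)
```

Each pass through the loop adds another Line2D to the Axes, so this approach creates one artist per data point.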

This took days for me to figure out and required a paradigm shift in the process.

Does it really need to be that complicated? I am going to re-create the graph with a simpler example to find out.
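One option worth testing, and not the approach above, is the markevery property of a matplotlib line. It accepts a list of point indices and draws markers only at those positions while the line itself stays continuous. Here is a minimal sketch with hypothetical data (cum_pnl and entry_idx are illustrative names):

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend for a headless run
import matplotlib.pyplot as plt

# Hypothetical data: cumulative PnL per week and the positions where trades begin.
cum_pnl = [0, 250, -100, 400, 650, 300]
entry_idx = [0, 3]

fig, ax = plt.subplots()
# One call: the whole line is drawn, but diamonds appear only at entry_idx.
(line,) = ax.plot(
    cum_pnl,
    color="black",
    marker="D",
    markevery=entry_idx,
    markerfacecolor="orange",
    markeredgecolor="orange",
    markersize=12,
)
```

With dates on the x-axis, the indices still refer to positions in the data, not to the dates themselves.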

Here’s a rough list of objectives for coming up with data to plot:

- Generate a list _2017_Fri of 20 consecutive Fridays starting Jan 1, 2017.

- Generate random_pnl_list of 20 simulated trade results from -1000 to +1000.

- Generate cumul_pnl_from_random, which will be a list of cumulative PnL based on random_pnl_list.

- Randomly determine trade_entries: five Fridays from _2017_Fri.

- Plot the cumul_pnl_from_random line.

- Plot markers at trade_entries.

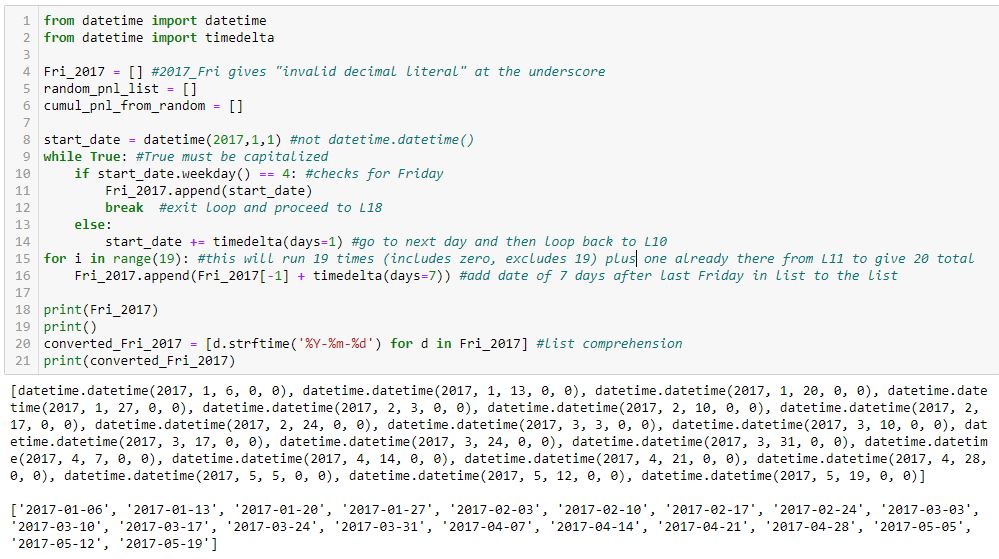

This accomplishes objective #1:

I tried to comment extensively in order to explain how this works.

Two lists of dates are shown in the output at the bottom. The first list contains datetime.datetime objects, which print as a mess. The second list is cleaned up (converted to strings) with L20.

I will continue next time.

Categories: Python | Comments (0) | Permalink

Debugging Matplotlib (Part 3)

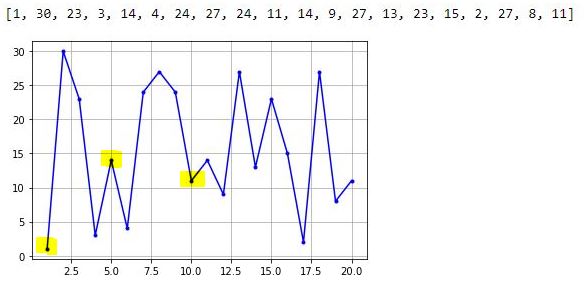

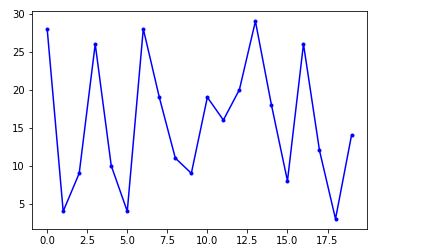

Posted by Mark on May 10, 2022 at 07:07 | Last modified: March 16, 2022 14:44

Today I resume trying to fix the x-values and x-axis labels from the bottom graph shown here.

As suggested, I need to create a list of x-values. Even better than a loop with .append() is this direct route:

> randomlist_x = list(range(1, len(randomlist) + 1))

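This creates a range object that starts at 1 and stops just before len(randomlist) + 1, because range() excludes its stop value. The + 1 therefore makes the last x-value equal to the length of randomlist, which corrects for zero-indexing. The list constructor converts the range to a list. Now, I can redo the graph: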
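A quick check of why the + 1 belongs outside len(): range() never includes its stop value, and adding 1 to the list itself is an error. The five-element randomlist here is just the start of the list printed in Part 2.

```python
randomlist = [30, 24, 3, 29, 20]

# range() stops before its second argument, hence the + 1 after len().
randomlist_x = list(range(1, len(randomlist) + 1))
print(randomlist_x)  # [1, 2, 3, 4, 5]

# With the parenthesis misplaced, Python tries to add 1 to a list and raises TypeError.
try:
    list(range(1, len(randomlist + 1)))
except TypeError as err:
    print("TypeError:", err)
```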

> fig, ax = plt.subplots(1)

>

> ax.plot(randomlist_x, randomlist, marker='.', color='b') #plot, not plt

> plt.show()

The one problem I can see is decimal x-axis labels, which are not acceptable. Beyond that, the graph is hard to read, so I will add the following to show grid lines:

> plt.grid()

I can now clearly see the middle highlighted dot has an x-value of 5. Counting up to x = 10 for the right highlighted dot confirms that each dot has an x-increment of 1, so the highlighted dot on the left is at x = 1. This accomplishes my first goal from the third-to-last paragraph of Part 2.

To get rid of the decimal x-axis labels, I need to set the major tick increment. I can do this by importing the MultipleLocator class from the matplotlib.ticker module and then adding these lines later:

> from matplotlib.ticker import MultipleLocator

> .

> .

> .

> ax.xaxis.set_major_locator(MultipleLocator(5))

> ax.xaxis.set_minor_locator(MultipleLocator(1))

The major and minor tick increments are now 5 and 1, respectively, and the decimal values are gone.
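MultipleLocator fixes the spacing at exactly 5. A fixed step can become awkward if the number of trades changes. Another option, swapped in here and not used in this post, is MaxNLocator(integer=True). It still chooses the spacing automatically but places ticks only at whole numbers. A minimal sketch:

```python
import random

import matplotlib
matplotlib.use("Agg")  # non-interactive backend for a headless run
import matplotlib.pyplot as plt
from matplotlib.ticker import MaxNLocator

randomlist = [random.randint(1, 30) for _ in range(20)]
randomlist_x = list(range(1, len(randomlist) + 1))

fig, ax = plt.subplots()
ax.plot(randomlist_x, randomlist, marker=".", color="b")
# Let matplotlib pick how many ticks to show, but only ever at integer positions.
ax.xaxis.set_major_locator(MaxNLocator(integer=True))
```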

Thus far, the existing code is:

> from matplotlib.ticker import MultipleLocator

> import matplotlib.pyplot as plt

> import numpy as np

> import pandas as pd

> import random

>

> randomlist = []

> for i in range(20):

>     n = random.randint(1,30)

>     randomlist.append(n)

> print(randomlist)

>

> randomlist_x = list(range(1, len(randomlist)+1))

> fig, ax = plt.subplots(1)

>

> ax.plot(randomlist_x, randomlist, marker='.', color='b') #plot, not plt

> ax.xaxis.set_major_locator(MultipleLocator(5))

> ax.xaxis.set_minor_locator(MultipleLocator(1))

>

> plt.grid()

> plt.show()

I will continue next time.

Categories: Python | Comments (0) | Permalink

Debugging Matplotlib (Part 2)

Posted by Mark on May 5, 2022 at 07:20 | Last modified: March 15, 2022 11:40

Because I find matplotlib so difficult to navigate, I have been trying different potential solutions found online. Some have an [undesired] effect and others do nothing at all. Instances of the latter are particularly frustrating and leave me determined to understand why. Today I will begin explaining what I aim to do with visualizations in the backtesting code.

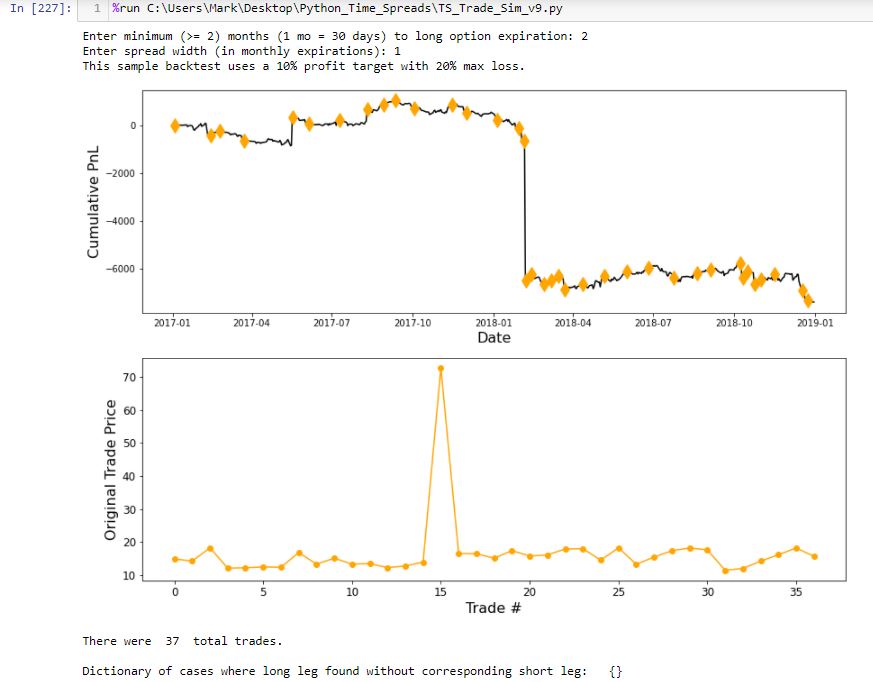

The first graph I wish to present is cumulative backtested PnL on a daily basis. I create a dataframe column ‘Cum.PnL’ to calculate the difference between current and original position price. To each entry in this column, I add realized_pnl. Whenever a trade is closed at profit target or max loss, I increment realized_pnl by that trade’s PnL.
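Since the dataframe code isn't shown, here is a plain-Python sketch of the bookkeeping this paragraph describes. It uses hypothetical rows of (original price, current price, closed today?). The sign convention follows the wording above (current minus original); a credit position may need the reverse. The option multiplier is left out. Both are assumptions.

```python
# Hypothetical daily rows: (original position price, current position price, closed today?)
rows = [
    (10.0, 10.0, False),
    (10.0, 12.0, False),
    (10.0, 15.0, True),   # trade 1 closes here
    (20.0, 20.0, False),  # trade 2 opens
    (20.0, 18.0, True),   # trade 2 closes here
]

realized_pnl = 0.0
cum_pnl = []
for original, current, closed in rows:
    open_pnl = current - original           # sign as described in the text (an assumption)
    cum_pnl.append(open_pnl + realized_pnl)  # open PnL plus everything already banked
    if closed:
        realized_pnl += open_pnl            # bank the closed trade for later rows

print(cum_pnl)  # [0.0, 2.0, 5.0, 5.0, 3.0]
```

The curve stays continuous across the trade change (5.0 on the closing row and 5.0 on the next), which is why a single bad price stands out as a gap.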

Graphing this results in a smooth, continuous cumulative PnL curve except for one extreme gap. Closer inspection reveals that the price of one option on this particular day is over $50 higher than it should be, which translates to the $5,000+ loss seen here:

The lower graph shows initial position price and makes it clear that something is way out-of-whack with trade #15. I manually edit that entry in the data file.

The upper graph includes an orange diamond whenever a new trade begins. I endured days of frustration trying to figure out how to do this. To better understand my solution, I will create a simpler example devoid of the numerous variables contained in my backtesting code and advance one step at a time to avoid quirky errors.

First, I will import packages (or modules):

> import matplotlib.pyplot as plt

> import numpy as np

> import pandas as pd

> import random

Next, I will create a random list:

> randomlist = []

> for i in range(20):

>     n = random.randint(1,30)

>     randomlist.append(n)

> print(randomlist)

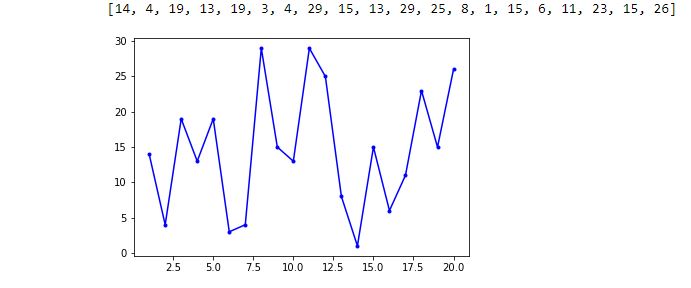

This prints a 20-element list of random integers between 1 and 30, inclusive. After a few runs, I got this:

[30, 24, 3, 29, 20, 7, 29, 25, 25, 20, 15, 24, 8, 13, 9, 14, 19, 30, 1, 5]

I like this example because it has both 1 and 30 in it to demonstrate inclusivity at each boundary.
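The same list can be built with a list comprehension. A larger sample also makes the inclusive bounds of randint() easy to confirm. The seed is a hypothetical addition so the check is repeatable.

```python
import random

random.seed(0)  # hypothetical seed, only to make the check repeatable

# Same 20-element list as the loop above, written as a list comprehension.
randomlist = [random.randint(1, 30) for _ in range(20)]

# Both endpoints of randint(a, b) can occur; many draws cover the full range 1..30.
draws = [random.randint(1, 30) for _ in range(10_000)]
print(min(draws), max(draws))  # 1 30
```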

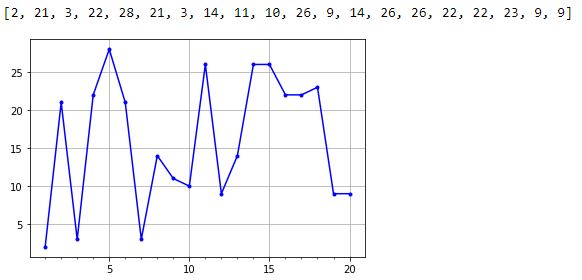

Next, I will invoke matplotlib’s “simplicity” by generating a graph in just three (not including the blank) lines:

> fig, ax = plt.subplots(1)

>

> ax.plot(randomlist, marker='.', color='b') #plot, not plt

> plt.show()

So far, so good!

I now want to fix two issues with the x-axis. Because I did not specify x-values, these are plotted by default as zero-indexed order in the data point sequence. This assigns x-value 0 to the first data point, 1 to the second, etc. I want 1 for trade #1, 2 for trade #2, etc. The other issue is that because all x-values are integers, I do not want any decimals in the x-axis labeling.
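The default x-values are easy to inspect on the line object that ax.plot() returns. This sketch plots the same values with and without explicit x-values. The randomlist here holds only the first five values from the list above.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend for a headless run
import matplotlib.pyplot as plt
import numpy as np

randomlist = [30, 24, 3, 29, 20]

fig, ax = plt.subplots()
(default_line,) = ax.plot(randomlist)  # no x given: x defaults to 0, 1, 2, ...
(explicit_line,) = ax.plot(range(1, len(randomlist) + 1), randomlist)  # x supplied

print(np.asarray(default_line.get_xdata()).tolist())
print(np.asarray(explicit_line.get_xdata()).tolist())
```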

My solution will be to create a list of numbers I want plotted on the x-axis. The downside to this, however, is loss of matplotlib’s automatic scaling, which it sometimes does very well as seen on the ‘Date’ axis above. Maybe this will still work with integers. We shall see.

I will continue next time.

Categories: Python | Comments (0) | Permalink

Debugging Matplotlib (Part 1)

Posted by Mark on May 3, 2022 at 07:28 | Last modified: March 11, 2022 16:28

Matplotlib is giving me fits. In this blog mini-series, I will go into the What and try to figure out the Why.

The matplotlib website says:

> Matplotlib is a comprehensive library for creating static, animated, and interactive

> visualizations in Python. Matplotlib makes easy things easy and hard things possible.

The DataCamp (DC) website says:

> Luckily, this library is very flexible and has a lot of handy, built-in defaults that

> will help you out tremendously. As such, you don’t need much to get started: you

> need to make the necessary imports, prepare some data, and you can start plotting

> with the help of the plot() function! When you’re ready, don’t forget to show your

> plot using the show() function.

>

> Look at this example to see how easy it really is…

I have found matplotlib to be the antithesis of “easy.” I am more in agreement with this previous DC paragraph:

> At first sight, it will seem that there are quite some [sic] components to consider

> when you start plotting with this Python data visualization library. You’ll probably

> agree with me that it’s confusing and sometimes even discouraging seeing the

> amount of code that is necessary for some plots, not knowing where to start

> yourself and which components you should use.

Using matplotlib is confusing and certainly discouraging. Many things may be done in multiple ways, and compatibility is not made clear. Partially as a result, I think some things do absolutely nothing. Support posts are available on websites like Stack Overflow, Stack Abuse, Programiz, GeeksforGeeks, w3resource, Python.org, Kite, etc. Questions, answers, and tutorial information span over a decade. Some now “deprecated” solutions no longer work. Also adding to the confusion are some solutions that may only work in select environments, which is not something I even see discussed.

What I do see discussed is how easy and elegant matplotlib is to use. I seem to be experiencing a major disconnect.

Maybe the difference is the simplicity of the isolated article examples in contrast to the complex application I am trying to implement. Why would my application be more complex than anyone else’s, though? I am trying to develop a research tool where the results are unknown. While different from writing sample code to present already-collected data, that would be a weak excuse. Discovering previously-hidden relationships is a common motivation behind data visualization.

To learn programming with matplotlib, my rough road has left me only one path: debug the process to understand why my previous attempts have failed. That is where I will start next time.

Categories: Python | Comments (0) | Permalink

2021 Performance Review (Part 11)

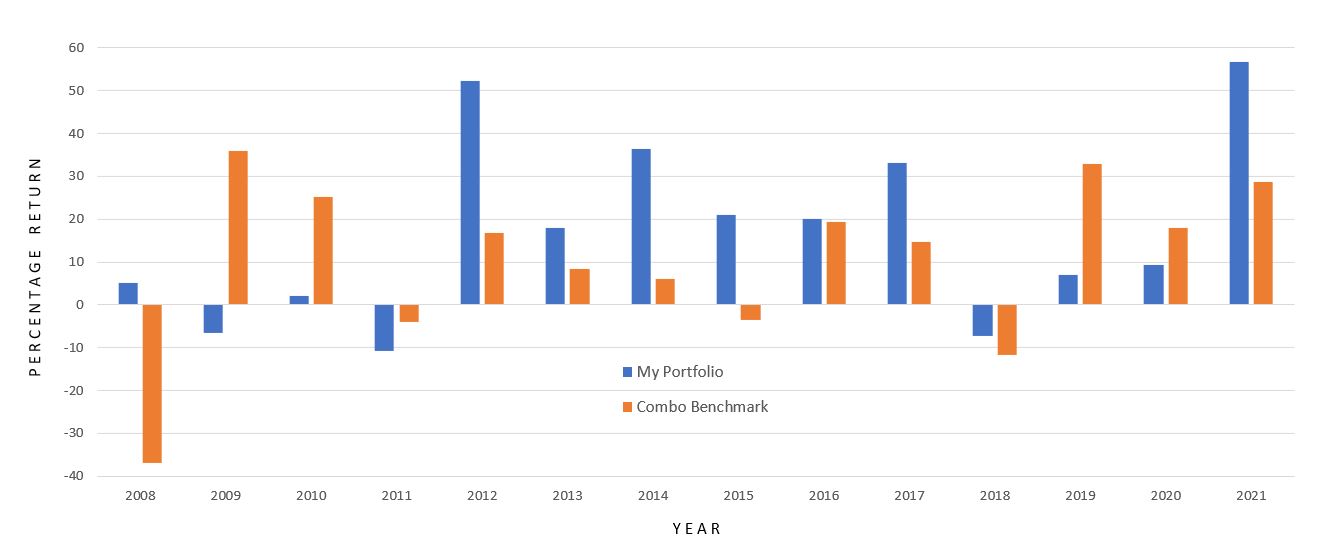

Posted by Mark on April 29, 2022 at 06:46 | Last modified: February 16, 2022 13:36

To conclude this mini-series, I offer the annual time frame along with some additional miscellany still germane to my pursuit.

Here is my performance by year versus the combo benchmark:

As mentioned in the second-to-last paragraph of Part 10, calendar years conceal my maximum drawdown. That is not the intent and the data must be sliced differently to show everything, which I have done.

My trading has taken several different forms over the years.

I assumed management of my personal brokerage account in 2001 and implemented stock-screening strategies I researched and developed. I had a decent first month but was hit by the 2001 correction thereafter. My stock investments were mostly short-term although I had a couple that went on to become spectacular long-term winners. Those played a large part in allowing me to leave my full-time job at the end of 2007.

In 2008, I began to trade options full-time. Making money in 2008 is one of my finest accomplishments. I changed strategy a few times over the first five years and suffered a couple of catastrophic losses from which I was able to rebound, but the pain was certainly real (see this blog mini-series). The Big Question going forward was: how would I navigate the periodic pain?

This review has updated my performance to include the last five years of trading. During that time, I phased out one brokerage account and started with another. For a couple years I was trading different strategies in the two accounts. 2018 included two 10% corrections in the same year and explosive volatility. I did some soul-searching because of the unlimited-risk nature of my approach, and I spent most of 2019 in cash backtesting and refining to address liquidity concerns along with absolute risk. Soon after jumping back into the market, I got an exit signal in Feb 2020. The hit I took was mitigated (see last sentence of this second paragraph) as the market proceeded to sell off an additional 27%.

The bar graph above reveals how much I missed in 2019 being mostly out of the market. 2019 could have conceivably been another 2021, which ended up as my best year ever. Sometimes it hurts to be on the sidelines.

Despite the pain, this blog mini-series provides an answer to the Big Question: quite well, with benchmark outperformance in excess of 6% p.a. since 2008. I hope to continue outperforming going forward, but there are no guarantees. Making this happen will take some luck, some discipline, and lots of effort.

Thus concludes my 2021 performance review showing my live-trading experience with all its bumps, bruises, and scars: 100% out-of-sample data, to be sure. None of the backtesting I have done over the years has been included here.

Categories: Accountability | Comments (0) | Permalink

2021 Performance Review (Part 10)

Posted by Mark on April 26, 2022 at 06:50 | Last modified: February 15, 2022 11:01

I left off discussing my ugly 2013 speculative GC trading experiment gone awry. While I paid a lot in “market tuition” to learn some important lessons, at issue here is that the combo index benchmark does not include GC.

Including the GC trades artificially lowers my relative return if coincident GC performance is not incorporated into the benchmark. I’m tempted to just leave it because I believe in erring on the side of conservatism. That’s really not fair to me, though. Besides, as previously discussed, I have already assessed the tax penalty on more than I actually made.

The following will result in a combo benchmark (RUT / GC / SPX) with the adjective “index” removed:

- My speculative GC trades run from 2/13/2013 – 5/17/2013.

- According to GC prices at execution, the futures drop from 1648.1 to 1359.6: -288.5 points, or -$28,850/contract (100 troy oz per contract).

- Futures margin during my largest position size is $5,400/contract (increased shortly thereafter per CME website).

- Futures options use SPAN margining, but I do not have historical data on the SPAN stress factors.

- With GC crash volatility, I would guess stress factors to be +/- 15% (if not 18% or more).

- At -15%, requirements are similar to the futures margin mentioned above; this allows me to calculate ROI.

- The combo benchmark performance is then a weighted average of GC and RUT.

- I lump all GC losses into Apr 2013, which is when my big portfolio drawdown (DD) occurred.

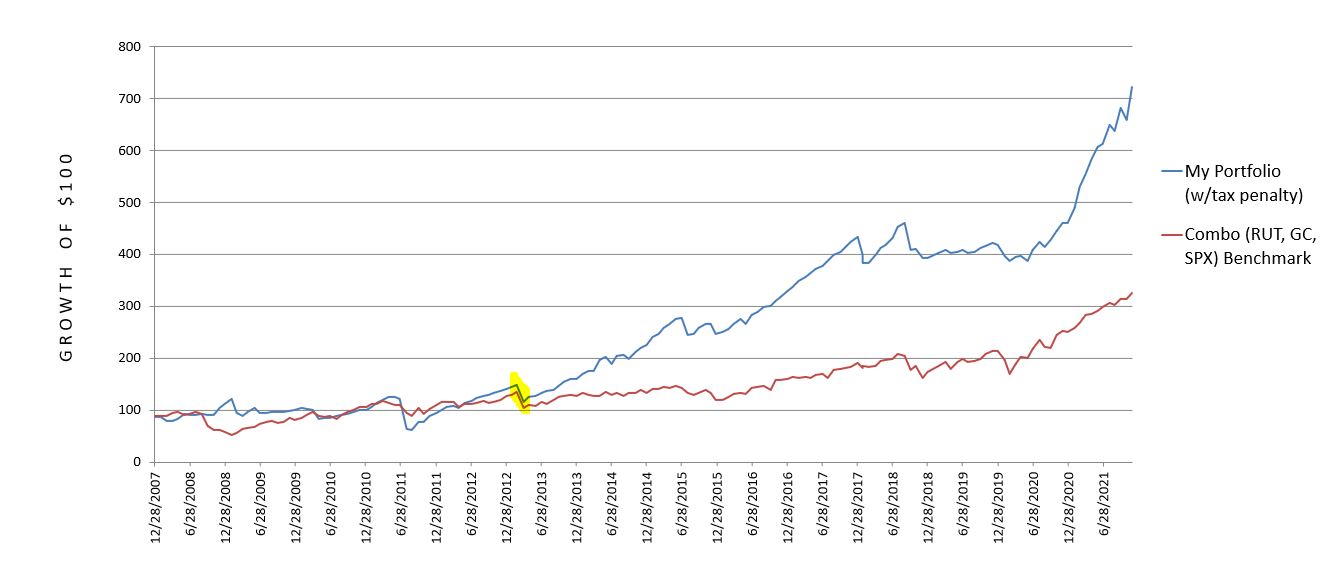

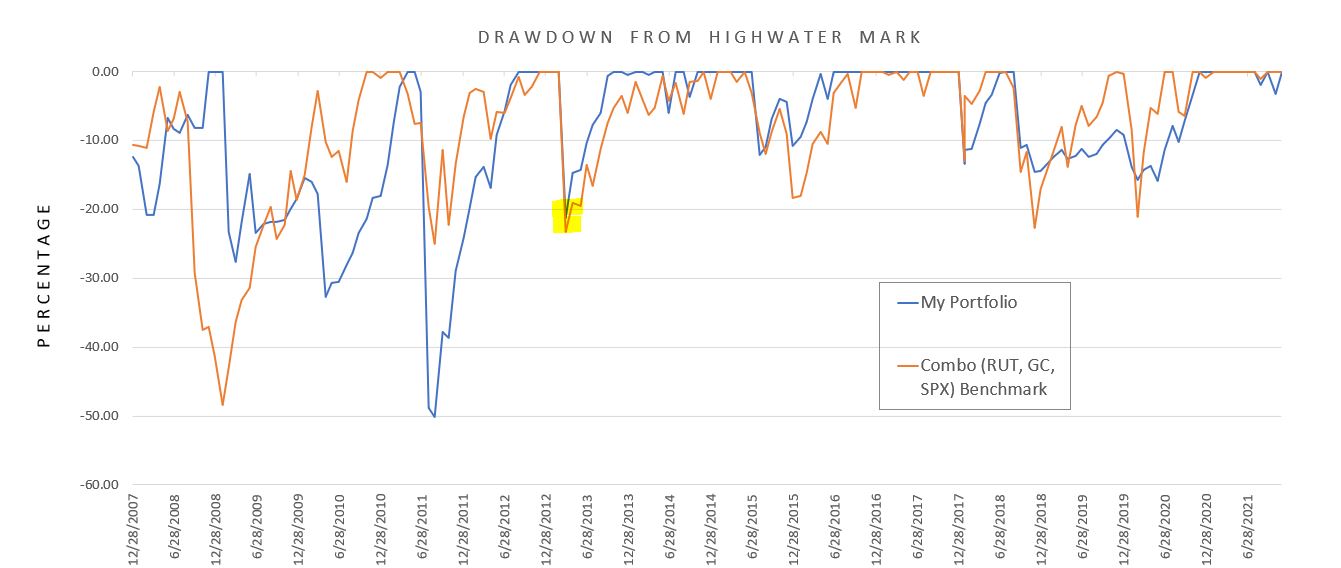

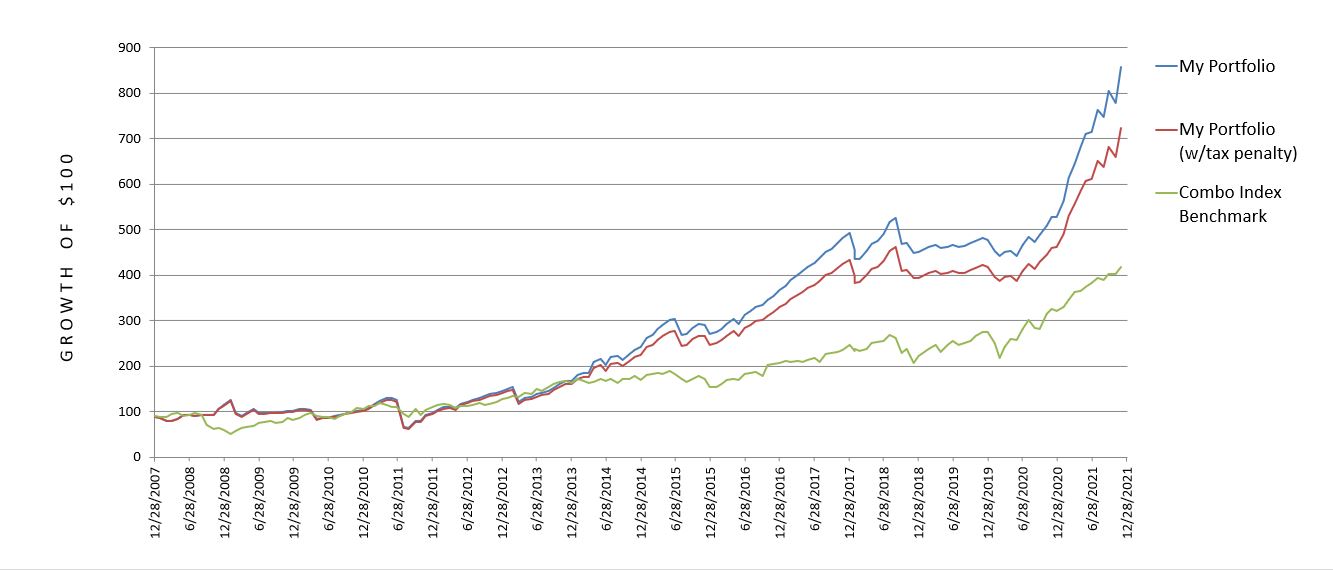

Here is the revised (compare with graphs shown in Part 7) 2008 – 2021 cumulative performance comparison:

My annualized return remains at 15.17%, but the combo benchmark worsens from 10.75% to 8.80%. This boosts my outperformance from 4.42% p.a. to 6.37% p.a. and is a more accurate comparison.

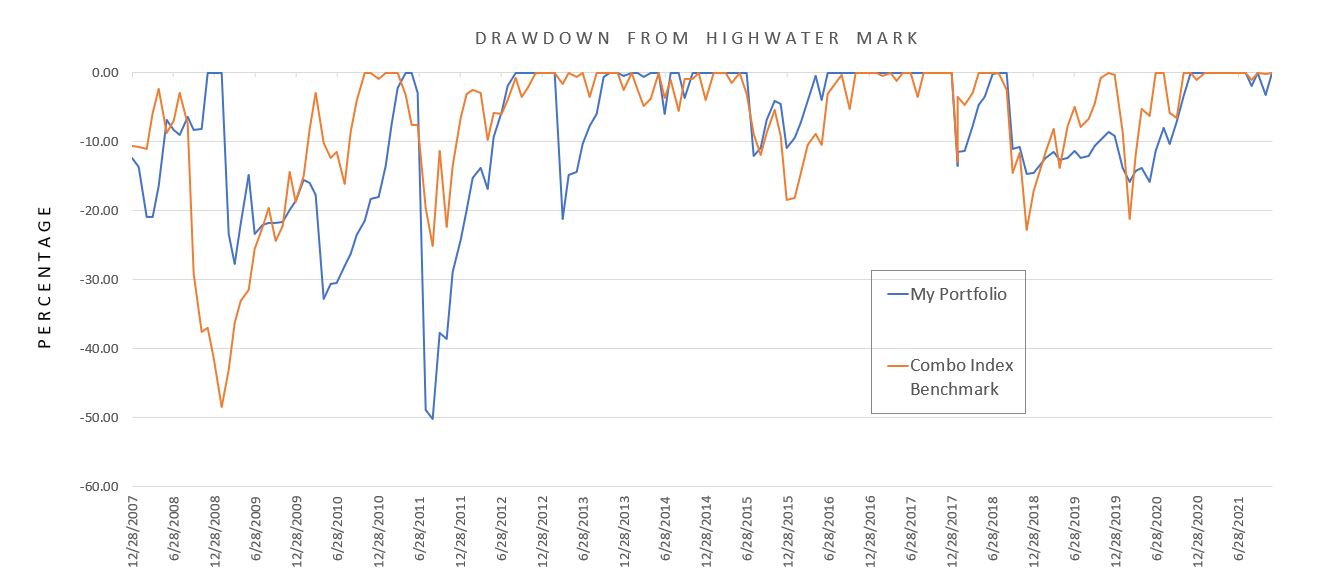

Here is the new DD comparison with the major revision highlighted.

My mean DD remains at 8.86%, but the combo benchmark worsens from 7.17% to 7.92%. The max DD is unaffected.

I want to comment on something written here, which I was alarmed to see in preparing for the current performance review:

> I very much like the fact that my worst year was limited to just over a 10% loss.

> This is the kind of stability somewhat lacking to the upside. I experienced three

> catastrophic losses over the last nine years and the overall performance suggests

> I have bounced back quite well.

This is somewhat misleading. Yes, the look from a calendar-year perspective does speak to rapid recovery. It also paints a prettier picture than my current DD calculations that use a monthly time frame. Max DD of 50.10% for me vs. 48.39% for the combo benchmark better reflects the mental anguish suffered at the time.

I will continue next time.

Categories: Accountability | Comments (0) | Permalink

2021 Performance Review (Part 9)

Posted by Mark on April 21, 2022 at 07:16 | Last modified: February 14, 2022 14:10

I left off last time reviewing two of my largest drawdowns to consider whether such big losses might be avoided in the future. Nobody knows, of course, but while I’m at it I will take a look at one more that I completely overlooked.

In Apr 2013, I lost just over 21% when the combo index benchmark was down less than 2%. This is a unique situation because it happened in gold (GC). I was dabbling in some small trades just to get a feel for the market, and I committed the fatal sin of doubling down and then basically doubling down again. This was particularly embarrassing because the equity market was doing fine; only a niche market followed by a few was getting destroyed, and I was part of the few.

Could a case have been made to exit now?

According to Reuters, this combined with the bloodshed during the next trading session was the worst 2-day gold rout in 30 years. I learned some powerful lessons from this (e.g. quit doubling down and mind stop-losses).

When things get crazy, I need to get out of the way. How to determine the craziness may not matter so much as just steering clear. Many potential indicators could be used with varying degrees of success.

You may have noticed my combo index benchmark (RUT and SPX) is not suitable for GC. I should probably correct this, and any correction will improve my relative performance. If I somehow integrate GC into the benchmark for a period of time, then the benchmark will suffer. Even if I underperform GC, GC significantly underperforms the benchmark portion it replaces.

I say “somehow” because determining how much to allocate to the GC trades is difficult. This is a leveraged, speculative [naked put] play that starts very small and goes horribly awry. The fairest way to proceed seems to be determining the margin requirement at max position size, and calculating the equity portion as a fraction of the total portfolio.

This experience scarred me to a point where I still have occasional trouble to this day. I will trade a new strategy in the smallest of size, which is good. When I take losses, though, then my interest wanes and I wait before trying the new strategy again (if ever). That is bad!

I feel quite confident to say this will not happen again. I no longer fight extra hard to avoid losses, and dramatically increasing trade size would be anathema to me.

As much as I might like to pretend the GC disaster didn’t happen, I certainly cannot justify excluding the trades because I saw them through and suffered heavy losses for which I should be held accountable.

I will continue next time.

Categories: Accountability | Comments (0) | Permalink

2021 Performance Review (Part 8)

Posted by Mark on April 18, 2022 at 07:20 | Last modified: February 12, 2022 09:29

I left off discussing drawdown (DD) statistics and my dissatisfaction with this aspect of my trading performance. My current maximum DD is the best it will ever be.

The DD [second] graph shown in Part 7 does suggest my risk management to be getting better. Since late 2013, benchmark pullbacks over 15% have been less for my portfolio: -11% vs. -18% in Jan 2016, -15% vs. -23% in Dec 2018, and -16% vs. -21% in Mar 2020. I may be even better on a daily basis than these end-of-month values reflect. In 2020 when SPX drops 34% (bottoming out on March 23), my account equity is down less than 12%.

I feel more risk-averse and cautious as I get older. This is a main reason why I made significant changes to my trading strategy in Dec 2018 to carry insurance at all times. Some people say volatility, as a mean-reverting asset class, should be sold when high. A caveat is the rare case when “high” is a prelude to much higher: a chance I no longer wish to take.*

The big DDs are truly permanent losses and therefore worth some price (see this post) to avoid. My account can still reach a new post-DD high, but it won’t be as high as it would have otherwise been.

I’d like to present a few of the big drawdown periods along with the million-dollar question: is there anything I can do to avoid these kinds of equity drops?

In the May 2010 Flash Crash, I lose 32.77% with the combo index benchmark down 10.23%. I’ve heard a lot of traders say “the writing was on the wall” for this one. What do you think?

If this is going to be my signal, then I need to be ready to take action immediately. I should also be prepared for a near-term profit vacuum due to lag time before I get a full position in place once again.

On the upside: “cash is a position too.” On the downside: “opportunity cost, baby.”

At first glance, the volatility story ahead of the Flash Crash is somewhat equivocal. Only in hindsight is closing up shop and preparing for the worst an easy decision.

Aug 2011 is catastrophic with a loss just over 50% vs. the combo benchmark down half that. Do you see what comes next?

This is much less convincing to me from a technical standpoint. The volatility story, however, is unequivocal.

Neither of these is smooth sailing, per se, but if either is sufficient to sound the alarm, then how many profitable opportunities will be missed and how will that affect long-term performance?

It bears repeating: only in hindsight is closing up shop and preparing for the worst an easy decision.

I will continue next time with one more case study.

* The story may be different for a client who hires me to manage 10-20% of their portfolio and target outsized returns knowing the most they can lose is a fraction of their total.

2021 Performance Review (Part 7)

Posted by Mark on April 14, 2022 at 07:22 | Last modified: February 10, 2022 17:45

Last time, I decided to exclude results from my 2001 – 2007 stock trading years. I also settled upon a custom benchmark combination of RUT/SPX to more closely parallel my trading vehicles. I am finally ready to delve into the numbers.

Here is my performance record as a full-time option trader since 2008:

Since 2008, I have posted an average annual return of 15.17% vs. 10.75% for the combo index benchmark: outperformance of at least (see fifth-to-last paragraph here) 4.42% per year. A Motley Fool article from 2/1/22 writes:

> The S&P 500 gained value in 40 of the past 50 years, generating an average annualized return of 9.4%.

If sold, taxes are still due on these funds in the form of LTCG. The same is true for the combo index benchmark (green) and my red (penalized for active trading) equity curve.

My non-penalized (blue) equity curve simply shows taxes do matter (my annualized return would be 16.59% sans penalty). I think inquiring about tax implications and trading frequency is an integral part of due diligence on potential investment advisors (IA), money managers, or strategy vendors.

Pop quiz: does the blue curve apply if done in a Roth IRA?

Answer: please consult a tax professional for specifics about your individual situation. From my understanding, though, no tax liability remains on after-tax funds, which means the blue curve underestimates equity. The combo index benchmark still owes taxes in the form of LTCG (unless eligible for step-up at death, perhaps).

I would be elated to maintain benchmark outperformance of 4.42% p.a. if claims that most IAs underperform are true.

Total return does not tell the whole story, however. I believe drawdown (DD) is equally important for performance evaluation (see last paragraph here):

My maximum drawdown (MDD) is -50.10%, which is slightly worse than the benchmark’s -48.39%. That does not take place during the financial crisis, which I manage well. My MDD occurs in Aug – Sep of 2011 with the benchmark only down 25%. My average DD across all months is -8.86%, which is a bit worse than the benchmark’s -7.17%.
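Drawdown here is measured on month-end values against the running peak. Below is a minimal sketch of that calculation using hypothetical month-end equity values, not the figures behind these statistics. Counting months at a new high as 0% when averaging is an assumption about how the mean DD is computed.

```python
# Hypothetical month-end equity values (illustration only).
equity = [100.0, 120.0, 90.0, 60.0, 130.0, 117.0]

drawdowns = []
peak = equity[0]
for value in equity:
    peak = max(peak, value)             # running high-water mark
    drawdowns.append(value / peak - 1)  # 0 at a new high, negative below it

max_dd = min(drawdowns)                 # most negative value = maximum drawdown
mean_dd = sum(drawdowns) / len(drawdowns)
print(f"MDD {max_dd:.2%}, mean DD {mean_dd:.2%}")  # MDD -50.00%, mean DD -14.17%
```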

I am happy with my total return, but not so much with my DD statistics (risk management). Unfortunately, as part of the historical record I cannot improve on MDD. Going forward, I can work to exit sooner if I see signs of market turmoil. If the market falls more than 50.1% at some point and my portfolio falls less (or gains), then I can jump ahead on a relative basis in terms of MDD. These efforts may or may not succeed, though. There are no guarantees.

I will continue next time.

Categories: Accountability | Comments (0) | Permalink